LUC #22: Unpacking the Leading API Architectural Styles

Plus, how does Event-driven Architecture work, overview of the primary network protocols, and how tokenization works

Welcome back, fellow engineers! We're excited to share another issue of Level Up Coding’s newsletter with you.

In today’s issue:

Understanding The Most Prominent API Architectural Styles

How Does Tokenization Work? (Recap)

What is Event-driven Architecture? How Does it Work? (Recap)

Read time: 8 minutes

A big thank you to our partner Postman who keeps this newsletter free to the reader.

The power of AI has found its way to API management, with Postman leading the charge through their AI assistant, Postbot. Read more about it here!

Understanding The Most Prominent API Architectural Styles

API architectural styles determine how services communicate with each other. So many styles exist because applications have diverse needs, ranging from real-time data streaming to complex data retrieval and manipulation. The choice of an API architecture affects the efficiency, flexibility, and robustness of an application, so choose based on your application's requirements rather than on what is most commonly used. Let's dive into some of the most prominent API architectural styles and understand their unique offerings.

REST (Representational State Transfer)

We'll kick things off with REST, the API architectural style at the heart of many web services. REST leverages the simplicity and universality of HTTP. Its stateless nature (each request carries everything the server needs to process it) supports scalability, while resource identification through URIs provides a clear structure. By using standard HTTP methods like GET, POST, PUT, and DELETE, REST offers a straightforward approach to CRUD operations, as well as a consistent interface. The primary strength of REST lies in its simplicity, which enables building scalable and maintainable systems.
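
The mapping from HTTP methods and URIs to CRUD operations can be sketched without a web framework. The following is a minimal illustration, not a real server: the `/books` resource, the `handle` function, and the status codes chosen for each case are assumptions made for the example.

```python
# Hypothetical in-memory "server": maps HTTP methods on /books URIs to CRUD operations.
books = {}      # resource store, keyed by id
next_id = 1

def handle(method, path, body=None):
    """Return (status_code, payload) for one request; nothing is remembered per client."""
    global next_id
    parts = path.strip("/").split("/")
    if parts[0] != "books" or len(parts) > 2:
        return 404, None
    if len(parts) == 1:                          # collection URI: /books
        if method == "GET":
            return 200, list(books.values())
        if method == "POST":                     # Create
            book = {"id": next_id, **body}
            books[next_id] = book
            next_id += 1
            return 201, book
        return 405, None
    if not parts[1].isdigit():
        return 404, None
    book_id = int(parts[1])                      # item URI: /books/<id>
    if book_id not in books:
        return 404, None
    if method == "GET":                          # Read
        return 200, books[book_id]
    if method == "PUT":                          # Update (full replacement)
        books[book_id] = {"id": book_id, **body}
        return 200, books[book_id]
    if method == "DELETE":                       # Delete
        del books[book_id]
        return 204, None
    return 405, None

status, created = handle("POST", "/books", {"title": "Dune"})
```

Each resource has one URI, and the method alone says what to do with it, which is where the consistency of a REST interface comes from.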

GraphQL

In some ways, GraphQL can feel like the opposite of REST. Whereas REST can require multiple requests to obtain interconnected data, GraphQL provides a more streamlined method. Rather than exposing a separate endpoint for each resource or entity, it provides one endpoint and lets clients specify exactly the data they need; in response, it delivers the requested data in a single query. Rather than over-fetching data, you get only what you require. This precision in data retrieval can improve performance as well as user experience.
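
The idea of "ask for exactly the fields you need" can be shown with a toy selector. This sketch is not GraphQL itself and uses no GraphQL library: the `USER` data, the `select` function, and the dictionary-based query shape are all hypothetical stand-ins for a real schema and query language.

```python
# Hypothetical data that a REST API might spread across /users/1 and /users/1/posts.
USER = {
    "id": 1,
    "name": "Ada",
    "email": "ada@example.com",
    "posts": [
        {"id": 10, "title": "Hello", "body": "First post", "likes": 3},
        {"id": 11, "title": "APIs", "body": "Second post", "likes": 7},
    ],
}

def select(value, selection):
    """Return only the fields named in `selection`; nested dicts select sub-fields."""
    if isinstance(value, list):
        return [select(item, selection) for item in value]
    result = {}
    for field, sub in selection.items():
        if isinstance(sub, dict):
            result[field] = select(value[field], sub)   # descend into related data
        else:
            result[field] = value[field]                # plain field, copied as-is
    return result

# Roughly what the GraphQL query `{ name posts { title } }` asks for.
query = {"name": True, "posts": {"title": True}}
response = select(USER, query)
```

The response contains the user's name and post titles and nothing else: no email, bodies, or like counts are sent, and the related posts arrive in the same round trip.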

SOAP (Simple Object Access Protocol)

In the early days of web applications, SOAP was the dominant protocol. With the rise of REST offering much simpler JSON payloads over HTTP, SOAP's popularity waned. Nonetheless, it is still prevalent in enterprise systems that require extensibility and robustness. SOAP is a protocol that emphasizes security, transactional integrity, and robust messaging patterns. Its XML-based message format and ability to operate over various transport protocols make it a versatile choice. Like REST, SOAP is stateless by default, and it features its own security specification (WS-Security), which provides a suite of tools to ensure message integrity, confidentiality, and authentication.
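
To make the XML-based message format concrete, here is a minimal sketch that builds a SOAP 1.1 envelope with Python's standard library. The `GetPrice` operation, the `Symbol` element, and the `http://example.com/stock` namespace are hypothetical; a real service defines these in its WSDL.

```python
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"   # SOAP 1.1 envelope namespace
APP_NS = "http://example.com/stock"                      # hypothetical service namespace

def build_request(symbol):
    """Build a SOAP envelope wrapping a hypothetical GetPrice operation."""
    ET.register_namespace("soap", SOAP_NS)
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    ET.SubElement(envelope, f"{{{SOAP_NS}}}Header")       # WS-Security data would live here
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    operation = ET.SubElement(body, f"{{{APP_NS}}}GetPrice")
    ET.SubElement(operation, f"{{{APP_NS}}}Symbol").text = symbol
    return ET.tostring(envelope, encoding="unicode")

xml_message = build_request("IBM")

# Parse the message back, as a receiving service would.
parsed = ET.fromstring(xml_message)
namespaces = {"soap": SOAP_NS, "app": APP_NS}
symbol = parsed.find("soap:Body/app:GetPrice/app:Symbol", namespaces).text
```

The envelope, header, and body structure is the same whatever transport carries the message, which is what lets SOAP run over more than just HTTP.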

gRPC (gRPC Remote Procedure Calls)

Backed by Google, gRPC is a modern RPC framework that uses Protocol Buffers for efficient serialization. It shines in microservices architectures, offering features like bidirectional streaming and multiplexing over a single connection. With support for multiple programming languages and built-in authentication mechanisms, gRPC is well-suited for a variety of use cases across different domains, from distributed systems and real-time applications to polyglot systems and IoT.
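
Part of what makes Protocol Buffers compact is the varint encoding used for integer fields. The sketch below hand-rolls that encoding for a single field to show the idea; it is an illustration only, and real code would use classes generated by the `protoc` compiler rather than helper functions like these.

```python
def encode_varint(n):
    """Encode a non-negative int as a base-128 varint (7 bits per byte, low bits first)."""
    out = bytearray()
    while True:
        byte = n & 0x7F
        n >>= 7
        if n:
            out.append(byte | 0x80)   # continuation bit set: more bytes follow
        else:
            out.append(byte)
            return bytes(out)

def encode_int_field(field_number, value):
    """Encode one integer field: a key (field number plus wire type 0), then the value."""
    key = (field_number << 3) | 0     # wire type 0 means "varint"
    return encode_varint(key) + encode_varint(value)

# Field number 1 holding the value 150.
encoded = encode_int_field(1, 150)
```

The field name never appears on the wire, only its number, so this field takes three bytes where a JSON equivalent such as `{"a":150}` takes nine.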

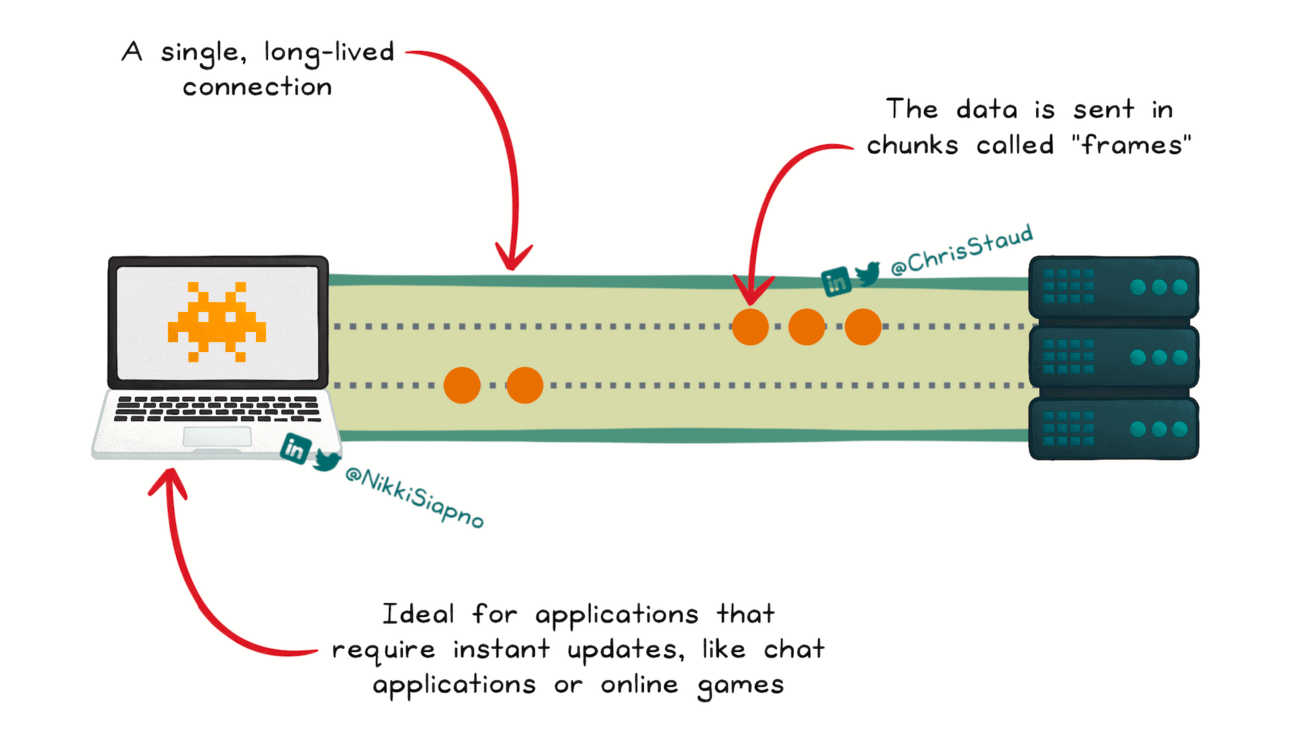

WebSockets

For applications demanding real-time communication, WebSockets provide a full-duplex communication channel over a single, long-lived connection. This allows both the client and the server to send messages at any time, independently of each other. This architectural style is especially popular in scenarios like online gaming, chat applications, and live financial trading, where low latency and continuous data exchange are paramount.
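
Full-duplex behaviour can be simulated locally with `asyncio`. This sketch does not open a real WebSocket: two in-memory queues stand in for the two directions of one connection, and the `peer` and `run_session` names, the message contents, and the `None` close sentinel are assumptions made for the example.

```python
import asyncio

async def peer(name, outbox, inbox, messages, received):
    """One side of the connection: sends and receives concurrently."""
    async def send_all():
        for message in messages:
            await outbox.put(f"{name}: {message}")
            await asyncio.sleep(0)          # yield so the other side can run
        await outbox.put(None)              # sentinel: this side is done sending

    async def receive_all():
        while (message := await inbox.get()) is not None:
            received.append(message)

    await asyncio.gather(send_all(), receive_all())

async def run_session():
    client_to_server = asyncio.Queue()      # one direction of the connection
    server_to_client = asyncio.Queue()      # the other direction
    client_got, server_got = [], []
    await asyncio.gather(
        peer("client", client_to_server, server_to_client,
             ["hello", "ping"], client_got),
        peer("server", server_to_client, client_to_server,
             ["welcome", "price=101", "price=102"], server_got),
    )
    return client_got, server_got

client_got, server_got = asyncio.run(run_session())
```

Neither side waits for a request before sending: the server pushes price updates on its own schedule while the client sends its messages independently, which is the pattern chat and trading applications rely on.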

MQTT (Message Queuing Telemetry Transport)

MQTT was developed in the late 90s at IBM. It was first used in specific industries that required lightweight communication, but its widespread adoption came with the rise of the Internet of Things (IoT). MQTT is a lightweight messaging protocol optimized for high-latency or unreliable networks. Its publish/subscribe model ensures efficient data dissemination among a vast array of devices, making it a go-to choice for IoT applications.
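
The publish/subscribe model can be sketched with a small in-memory broker. This is not an MQTT client and does no networking; the `Broker` class and the `home/...` topic names are hypothetical. The matching function follows MQTT's topic wildcards (`+` for one level, `#` for all remaining levels) in simplified form.

```python
from collections import defaultdict

def topic_matches(topic_filter, topic):
    """Simplified MQTT-style matching: '+' matches one level, '#' matches the rest."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)

class Broker:
    """Hypothetical broker: publishers and subscribers only ever talk to it."""
    def __init__(self):
        self.subscriptions = defaultdict(list)   # topic filter -> callbacks

    def subscribe(self, topic_filter, callback):
        self.subscriptions[topic_filter].append(callback)

    def publish(self, topic, payload):
        for topic_filter, callbacks in self.subscriptions.items():
            if topic_matches(topic_filter, topic):
                for callback in callbacks:
                    callback(topic, payload)

broker = Broker()
kitchen, everything = [], []
broker.subscribe("home/kitchen/+", lambda t, p: kitchen.append((t, p)))
broker.subscribe("home/#", lambda t, p: everything.append((t, p)))
broker.publish("home/kitchen/temperature", "21.5")
broker.publish("home/garage/door", "open")
```

A sensor publishing to `home/kitchen/temperature` needs no knowledge of who is listening, which is what lets the model scale to large fleets of devices.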

API architectural styles are more than just communication protocols; they are strategic choices that influence the very fabric of application interactions. Just as an efficient database schema can elevate an application's performance and user experience, the right API architectural style can streamline service interactions, enhance scalability, and ensure data integrity. As technology continues to evolve, it's important to stay up to date with API architectures to make informed decisions that best serve an application's needs.

How Does Tokenization Work? (Recap)

Tokenization is a security technique that replaces sensitive information with unique placeholder values called tokens. By tokenizing your sensitive data, you can protect it from unauthorized access and lessen the impact of data breaches. Because the rest of the system only handles tokens, it can also simplify the system by reducing the security measures needed in those other areas.

🔹 Tokenization process

Sensitive data is sent to a tokenization service when it enters the system. There, a unique token is generated, and both the sensitive data and the token are kept in a secure database known as a token vault. For extra protection, the sensitive data is generally encrypted within the secure data storage. The token is then used in place of the sensitive data within the system and third-party integrations.

🔸 Detokenization process

When an authorized service requires sensitive data, it sends a request to the tokenization service that contains the token. The tokenization service validates that the requester has all the required permissions. If it does, it uses the token to get the sensitive data from the token vault and returns it to the authorized service.
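
The tokenization and detokenization steps above can be sketched as a small in-memory service. This is an illustration under stated assumptions: the `TokenVault` class, the `tok_` prefix, and the service names are hypothetical, and the encryption of stored values mentioned above is omitted to keep the example dependency-free.

```python
import secrets

class TokenVault:
    """Hypothetical tokenization service backed by an in-memory token vault."""
    def __init__(self, authorized_services):
        self._vault = {}                               # token -> sensitive value
        self._authorized = set(authorized_services)

    def tokenize(self, sensitive_value):
        # The token is random, so it reveals nothing about the value it replaces.
        token = "tok_" + secrets.token_urlsafe(16)
        self._vault[token] = sensitive_value           # a real vault would encrypt this
        return token

    def detokenize(self, token, requester):
        # Validate the requester's permissions before touching the vault.
        if requester not in self._authorized:
            raise PermissionError(f"{requester} may not detokenize")
        return self._vault[token]

vault = TokenVault(authorized_services={"billing"})
token = vault.tokenize("4111 1111 1111 1111")

# The rest of the system stores and passes around only the token.
order = {"order_id": 42, "card": token}
card_for_billing = vault.detokenize(order["card"], requester="billing")
```

Only the vault ever holds the card number, so a breach of the order database would expose tokens that are useless without access to the tokenization service.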

What is Event-driven Architecture? How Does it Work? (Recap)

Event-driven architecture (EDA) is a software design pattern that emphasizes the production, detection, consumption of, and reaction to events. Adding an item to a shopping cart, liking a post, and paying a bill are all state changes that trigger a set of tasks in their respective systems.

EDA has four main components: events, producers, consumers, and channels.

Events: These are significant changes in state. They're generally immutable, typically lightweight and can carry a payload containing information about the change in state.

Producers: The role of a producer is to detect or cause a change in state, and then generate an event that represents this change.

Consumers: Consumers are the entities that are interested in and react to events. They subscribe to specific types of events and execute when those events occur.

Channels: Channels carry events from producers to consumers.
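
The four components can be sketched together in a few lines, using the shopping cart example. This is a minimal in-process illustration, not a production design: the `Event` and `Channel` classes, the `item_added` event type, and the `add_to_cart` producer are all hypothetical names, and a real system would typically use a message broker as the channel.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)                 # frozen: an event's fields cannot be reassigned
class Event:
    type: str
    payload: dict

class Channel:
    """Carries events from producers to whichever consumers subscribed."""
    def __init__(self):
        self._subscribers = defaultdict(list)   # event type -> consumers

    def subscribe(self, event_type, consumer):
        self._subscribers[event_type].append(consumer)

    def publish(self, event):
        for consumer in self._subscribers[event.type]:
            consumer(event)

def add_to_cart(channel, cart, item):
    """Producer: causes a state change, then emits an event describing it."""
    cart.append(item)
    channel.publish(Event("item_added", {"item": item, "cart_size": len(cart)}))

# Consumers: each reacts to the same event in its own way.
log, sizes = [], []
channel = Channel()
channel.subscribe("item_added", lambda e: log.append(f"added {e.payload['item']}"))
channel.subscribe("item_added", lambda e: sizes.append(e.payload["cart_size"]))

cart = []
add_to_cart(channel, cart, "book")
add_to_cart(channel, cart, "pen")
```

The producer knows nothing about the consumers, so a new reaction (say, a recommendation service) can be added by subscribing to the channel without changing `add_to_cart`.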

Overview of The Primary Network Protocols (Recap)

TCP/IP (Transmission Control Protocol/Internet Protocol): The underlying method of how information is passed between devices on the internet. While IP is responsible for addressing and routing data packets, TCP takes care of breaking the data into segments, reassembling them in order, and delivering them reliably.

HTTP (Hypertext Transfer Protocol): Responsible for fetching and delivering web content from servers to end-users.

HTTPS (Hypertext Transfer Protocol Secure): An enhanced version of HTTP, HTTPS integrates security protocols (namely TLS) to encrypt data, providing secure and encrypted communication.

FTP (File Transfer Protocol): Used for transferring files (uploading and downloading) between computers on a network.

UDP (User Datagram Protocol): A more streamlined counterpart to TCP, UDP transmits data without the overhead of establishing a connection, leading to faster transmission but with no guarantee that the data will be delivered or that it will arrive in order.

SMTP (Simple Mail Transfer Protocol): SMTP manages the formatting, routing, and delivery of emails between mail servers.

SSH (Secure Shell): A cryptographic network protocol that ensures safe data transmission over an unsecured network. It provides a safe channel, making sure that hackers can't interpret the information by eavesdropping.
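
To illustrate the TCP and UDP entries above, the connectionless nature of UDP can be seen with Python's standard `socket` module. This sketch sends a single datagram over the loopback interface; the payload and the two-second timeout are arbitrary choices for the example.

```python
import socket

# Receiver: bind to the loopback address and let the OS pick a free port.
receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
receiver.bind(("127.0.0.1", 0))
receiver.settimeout(2.0)          # UDP does not guarantee delivery, so never wait forever
address = receiver.getsockname()

# Sender: no connect() and no handshake; the datagram is simply sent.
sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
sender.sendto(b"ping", address)

data, source = receiver.recvfrom(1024)

sender.close()
receiver.close()
```

With TCP (`SOCK_STREAM`), the sender would first have to `connect()` and the receiver `listen()` and `accept()`, which is the connection setup that UDP skips. On loopback the datagram arrives in practice, but across a real network it could be lost or reordered without either side being told.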

That wraps up this week’s issue of Level Up Coding’s newsletter!

Join us again next week, when we’ll explore different types of databases and how to choose one, DDD architecture, and SemVer (semantic versioning).