Sync vs Async Clearly Explained

(5 minutes) | One design choice determines whether your system blocks or flows.

Synchronous vs Asynchronous Communication

Why does placing an order feel instant in one product and slow in another?

The difference is often invisible to users but fundamental to the system.

It comes down to how much of the checkout flow runs synchronously, waiting for every dependency to confirm success, versus how much runs asynchronously, allowing non-critical work to complete after the order is accepted.

The only difference that matters: do you wait?

Imagine clicking “Purchase”.

Behind that button, the system fans out to payments, inventory, shipping, email, analytics, and fraud checks.

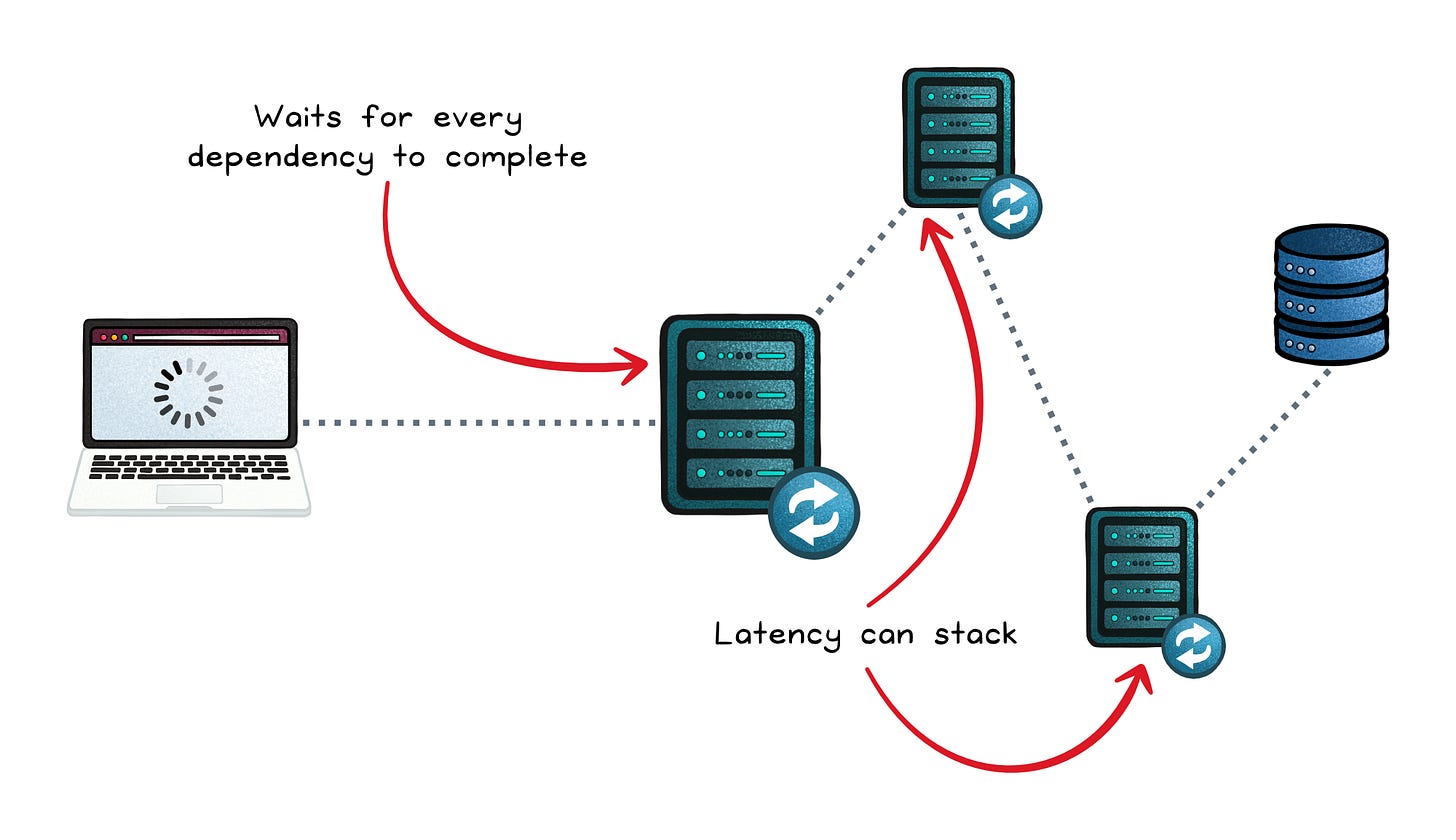

In a synchronous design, the checkout flow waits.

The order service calls each dependency and blocks until every one responds. If payment is slow or the email service hiccups, the user stares at a spinner. Nothing moves forward until the last response comes back.
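
To make the blocking behavior concrete, here is a minimal Python sketch of a fully synchronous checkout. The service names and latencies are hypothetical, and each "call" is simulated rather than a real network request. What matters is the shape: the user's wait is the sum of every dependency's latency, and a failure in any one of them (even the non-critical email step) fails the whole order.

```python
# Hypothetical downstream services and their simulated latencies (ms).
# In a real system each entry would be a blocking HTTP/gRPC call.
DEPENDENCY_LATENCY_MS = {
    "payments": 300,
    "inventory": 80,
    "shipping": 120,
    "email": 200,
    "analytics": 50,
    "fraud": 150,
}


class DependencyError(Exception):
    """Raised when a downstream service fails."""


def call(service: str, failing: frozenset[str]) -> int:
    """Simulate one blocking call: return its latency, or raise on failure."""
    if service in failing:
        raise DependencyError(f"{service} failed")
    return DEPENDENCY_LATENCY_MS[service]


def place_order_sync(failing: frozenset[str] = frozenset()) -> int:
    """Call every dependency in turn, waiting for each before the next.

    Returns the total time the user waited. A single failure aborts the
    whole checkout, because nothing proceeds until every call succeeds.
    """
    waited_ms = 0
    for service in DEPENDENCY_LATENCY_MS:
        waited_ms += call(service, failing)  # blocks until this one responds
    return waited_ms


if __name__ == "__main__":
    print(place_order_sync())  # 900: the sum of all six latencies
    try:
        place_order_sync(frozenset({"email"}))
    except DependencyError as exc:
        print("checkout failed:", exc)  # checkout failed: email failed
```

Email and analytics sit on the critical path here only because the code calls them inline; nothing about placing the order requires their answers.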

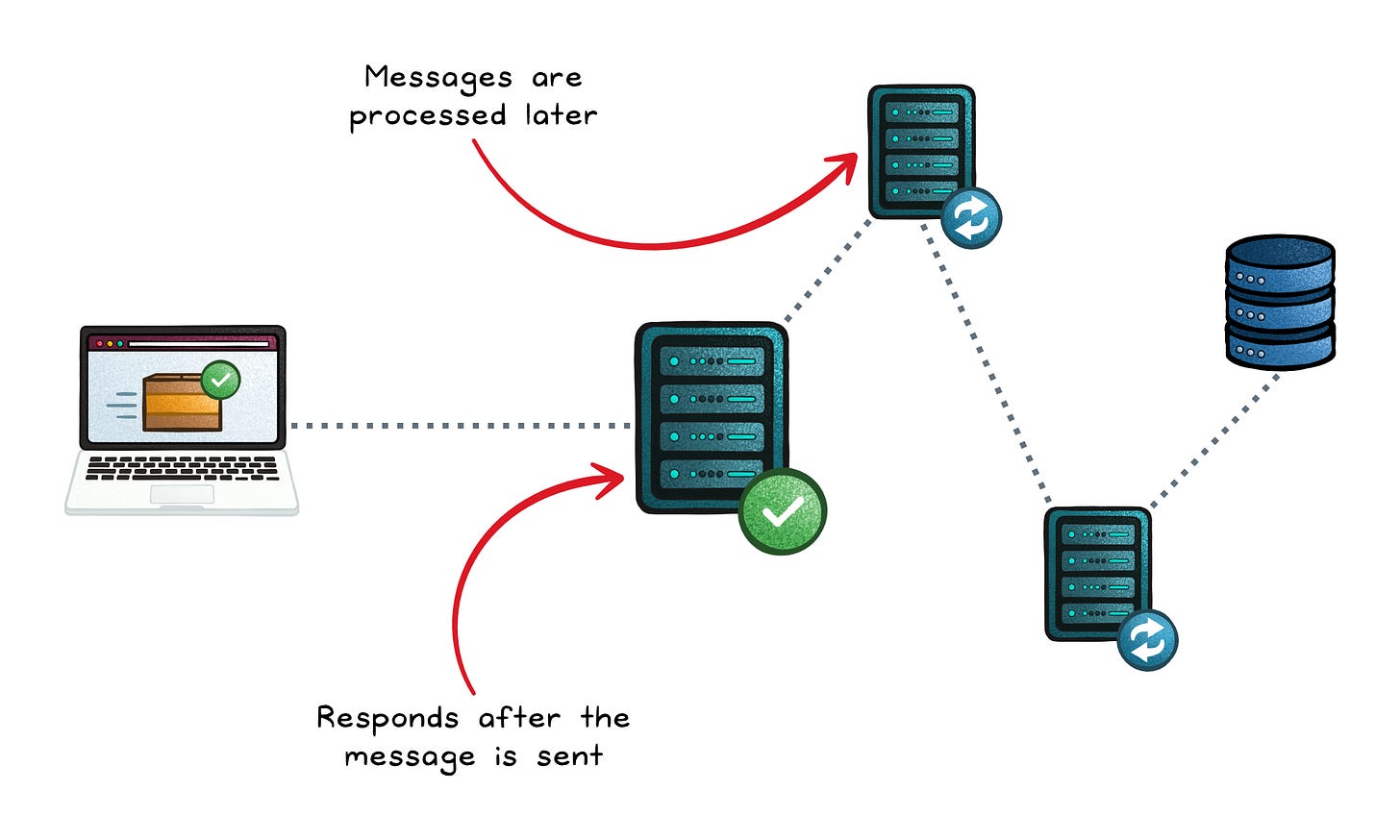

In an asynchronous design, the order is accepted first.

The system records the order, responds to the user, and emits messages for the rest of the work. Payment confirmation, receipts, analytics, and fulfillment happen independently, often through a queue or event bus, without holding the checkout flow hostage.
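
A minimal sketch of the same flow written asynchronously, assuming an in-process `queue.Queue` as a stand-in for a real broker (a production system would use a durable one, such as Kafka, so messages survive restarts). `place_order_async`, `worker`, and the `orders` store are hypothetical names. The request path only records the order and enqueues an event; the worker picks it up on its own schedule.

```python
import queue
import threading

# In-process stand-in for a message broker (assumption: not durable).
order_events: "queue.Queue[dict]" = queue.Queue()
orders: dict[str, str] = {}  # hypothetical order store: id -> status


def place_order_async(order_id: str) -> str:
    """Record the order, emit an event, and respond immediately."""
    orders[order_id] = "accepted"
    order_events.put({"type": "order_placed", "order_id": order_id})
    return f"Order {order_id} accepted"  # the user never waits on the worker


def worker() -> None:
    """Consume events later, independently of the checkout request."""
    while True:
        event = order_events.get()
        if event is None:  # sentinel: stop the worker
            order_events.task_done()
            break
        # Payment confirmation, receipts, fulfillment, etc. would run here.
        orders[event["order_id"]] = "fulfilled"
        order_events.task_done()


if __name__ == "__main__":
    threading.Thread(target=worker, daemon=True).start()
    print(place_order_async("A-1"))  # Order A-1 accepted (returns right away)
    order_events.join()              # demo only: wait for the backlog to drain
    print(orders["A-1"])             # fulfilled
    order_events.put(None)
```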

That’s the real distinction.

Synchronous communication means sending a request and waiting for a reply before continuing.

Asynchronous communication means sending a message and moving on, trusting the system to finish the work later.

Synchronous communication: wait for the response

Synchronous calls feel clean because the control flow stays linear:

Inline response → you get a result (or error) right away, in the same execution path.

Known outcome on return → the caller knows whether it succeeded as soon as the call finishes.

User-facing fit → logins, reads, validations, and short operations match the “user is waiting” model.

But synchronous communication creates temporal coupling: both services must be up and responsive at the same time. If the downstream service slows down or fails, the caller waits, times out, or fails too, often turning one slow component into a platform-wide incident.
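
Timeouts are the usual way to bound that coupling: the caller still needs the dependency, but it stops waiting after a fixed budget and fails fast instead of hanging. A minimal Python sketch, assuming a hypothetical degraded `slow_inventory_service` that takes 0.5 s and a caller willing to wait only 50 ms:

```python
import concurrent.futures
import time


def slow_inventory_service() -> str:
    """Hypothetical downstream call that is currently degraded."""
    time.sleep(0.5)
    return "in stock"


def check_inventory(timeout_s: float) -> str:
    """Call the dependency synchronously, but bound how long we wait."""
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = executor.submit(slow_inventory_service)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        # Fail fast instead of holding the caller indefinitely.
        return "unavailable"
    finally:
        # Don't block on the straggler; note it keeps running in the background.
        executor.shutdown(wait=False)


if __name__ == "__main__":
    print(check_inventory(timeout_s=0.05))  # unavailable
```

The timeout protects the caller, not the dependency: the slow call keeps running, and the caller still has no real answer to give. It limits the damage of temporal coupling rather than removing it.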

It also stacks latency. A single call can be fast, but a chain of calls is serial: A waits for B, B waits for C, and the user waits for all of it. Under load, threads pile up waiting on slow calls until the pool is exhausted, and throughput collapses.
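
The throughput collapse follows from Little's Law: if every in-flight request holds a thread for its full latency, a pool of N threads can complete at most N ÷ latency requests per second. A worked sketch with hypothetical numbers (200 threads and a serial chain A → B → C of 100 ms, 200 ms, and 200 ms):

```python
def chain_latency_ms(*hops_ms: int) -> int:
    """Serial chain: the caller waits for every hop in turn, so latencies add."""
    return sum(hops_ms)


def max_throughput_rps(threads: int, latency_ms: int) -> float:
    """Little's Law (concurrency = throughput x latency) rearranged:
    with one thread held per request, throughput <= threads / latency."""
    return threads * 1000 / latency_ms


if __name__ == "__main__":
    healthy = chain_latency_ms(100, 200, 200)        # 500 ms end to end
    print(max_throughput_rps(200, healthy))          # 400.0 requests/s
    degraded = chain_latency_ms(100, 200, 2000)      # C slows to 2 s
    print(round(max_throughput_rps(200, degraded)))  # 87 requests/s
```

One slow hop cuts the ceiling by more than three quarters without a single line of the caller's code changing.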

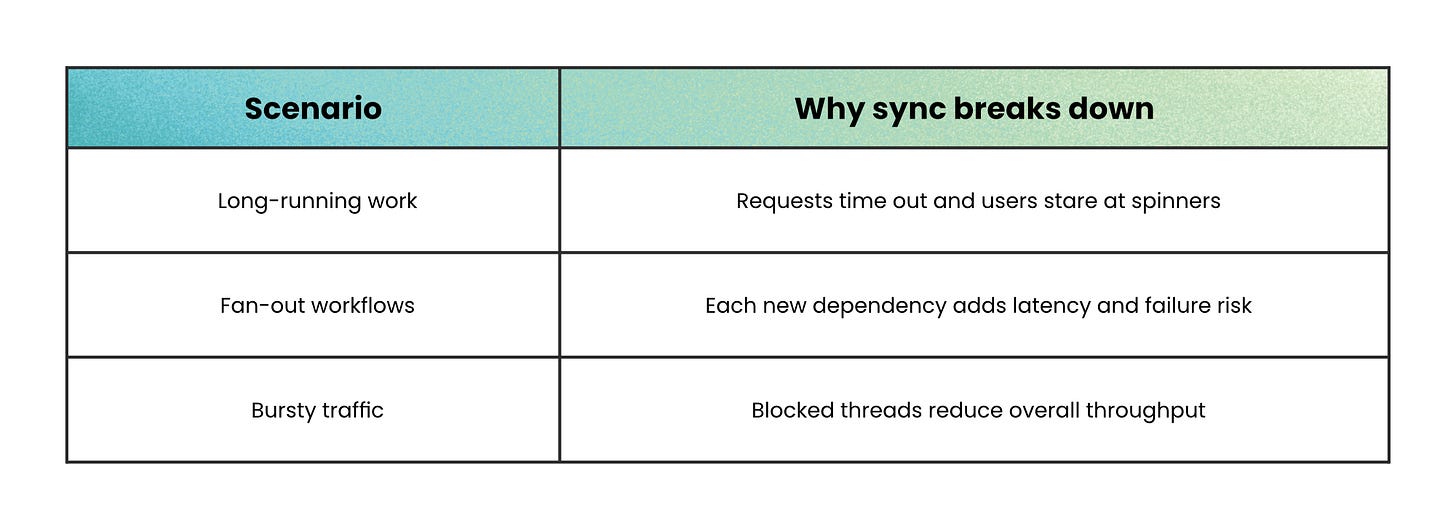

When not to use synchronous communication

Asynchronous communication: send and continue

Asynchronous systems usually introduce a message broker (a system that stores and routes messages) and communicate via queues or topics. Producers publish; consumers process when they can.

This changes the system’s structure:

Decoupling & resilience → messages can wait when a consumer is slow or offline, reducing cascading failures.

Throughput & scalability → producers don’t block, and you can scale consumers horizontally to chew through backlog.

Fan-out by default → one “order placed” event can trigger email, analytics, fraud checks, and inventory updates without the producer calling each service directly.
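
Fan-out can be sketched with a toy topic-based broker. `InMemoryBroker` is a hypothetical stand-in for something like Kafka or SNS, and it delivers to handlers inline for brevity, whereas a real broker persists the message and each consumer reads it independently. What it does show is the decoupling: the producer publishes one `order_placed` event and never references the services that react to it.

```python
from typing import Callable

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Toy topic-based broker; a hypothetical stand-in for Kafka, SNS, etc."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = {}

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # The producer only names the topic; it never references consumers.
        # Delivery is inline here for brevity; a real broker stores the
        # message and each consumer processes it on its own schedule.
        for handler in self._subscribers.get(topic, []):
            handler(event)


if __name__ == "__main__":
    broker = InMemoryBroker()
    for service in ("email", "analytics", "fraud", "inventory"):
        broker.subscribe(
            "order_placed",
            lambda event, service=service: print(service, "handles", event["order_id"]),
        )
    broker.publish("order_placed", {"order_id": "A-1"})  # one event, four reactions
```

Adding a fifth consumer is a new `subscribe` call; the producer's code does not change.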

The trade-off is eventual consistency: the system acknowledges the request now, but the final outcome happens later. How much later depends on how many messages are queued ahead of it and how fast consumers process them.
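
How long "later" lasts can be estimated with simple arithmetic: the backlog shrinks at the consumers' combined rate minus the rate at which new messages arrive. A sketch with hypothetical numbers (60,000 queued messages, consumers handling 50 messages/s each, 100 new messages/s arriving):

```python
import math


def drain_time_s(backlog: int, consumers: int,
                 per_consumer_rate: float, arrival_rate: float) -> float:
    """Seconds until the backlog clears at the current rates.

    Net drain rate = total consumer capacity - arrival rate. If consumers
    can't outpace arrivals, the backlog never clears and lag keeps growing.
    """
    net_rate = consumers * per_consumer_rate - arrival_rate
    if net_rate <= 0:
        return math.inf
    return backlog / net_rate


if __name__ == "__main__":
    print(drain_time_s(60_000, 4, 50, 100))  # 600.0 s (10 minutes)
    print(drain_time_s(60_000, 8, 50, 100))  # 200.0 s after scaling out
    print(drain_time_s(60_000, 2, 50, 100))  # inf: consumers only keep pace
```

Adding consumers helps only to the extent that their combined capacity exceeds the arrival rate; capacity that merely matches it keeps the system stable but never catches up.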

Async also raises the engineering bar:

More moving parts → brokers, persistence, acknowledgements, retries.

Idempotency required → consumers must safely handle duplicates (idempotent means “running it twice produces the same effect as once”).

Harder failure handling → errors may surface later, sometimes via a dead-letter queue (messages that repeatedly fail and need inspection).
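
Retries, idempotency, and a dead-letter queue fit together in one small consumer. A minimal sketch, assuming each message carries a unique `id` and that an in-memory set is acceptable for deduplication; in production the processed IDs would live in durable storage, ideally updated in the same transaction as the side effect. `IdempotentConsumer` and its parameters are hypothetical.

```python
from typing import Callable


class IdempotentConsumer:
    """Skips message IDs it has already processed; parks repeated failures."""

    def __init__(self, handler: Callable[[dict], None], max_attempts: int = 3) -> None:
        self.handler = handler
        self.max_attempts = max_attempts
        self.processed_ids: set[str] = set()  # durable store in a real system
        self.dead_letters: list[dict] = []    # stand-in for a dead-letter queue

    def consume(self, message: dict) -> None:
        if message["id"] in self.processed_ids:
            return  # duplicate delivery (e.g. a redelivered message): skip it
        for _ in range(self.max_attempts):
            try:
                self.handler(message)
            except Exception:
                continue  # transient failure: retry (real systems add backoff)
            self.processed_ids.add(message["id"])
            return
        # Every attempt failed: move it aside for inspection instead of
        # blocking the rest of the queue.
        self.dead_letters.append(message)


if __name__ == "__main__":
    receipts: list[str] = []
    consumer = IdempotentConsumer(lambda m: receipts.append(m["id"]))
    consumer.consume({"id": "order-1"})
    consumer.consume({"id": "order-1"})  # same message delivered twice
    print(receipts)  # ['order-1']: one receipt, not two
```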

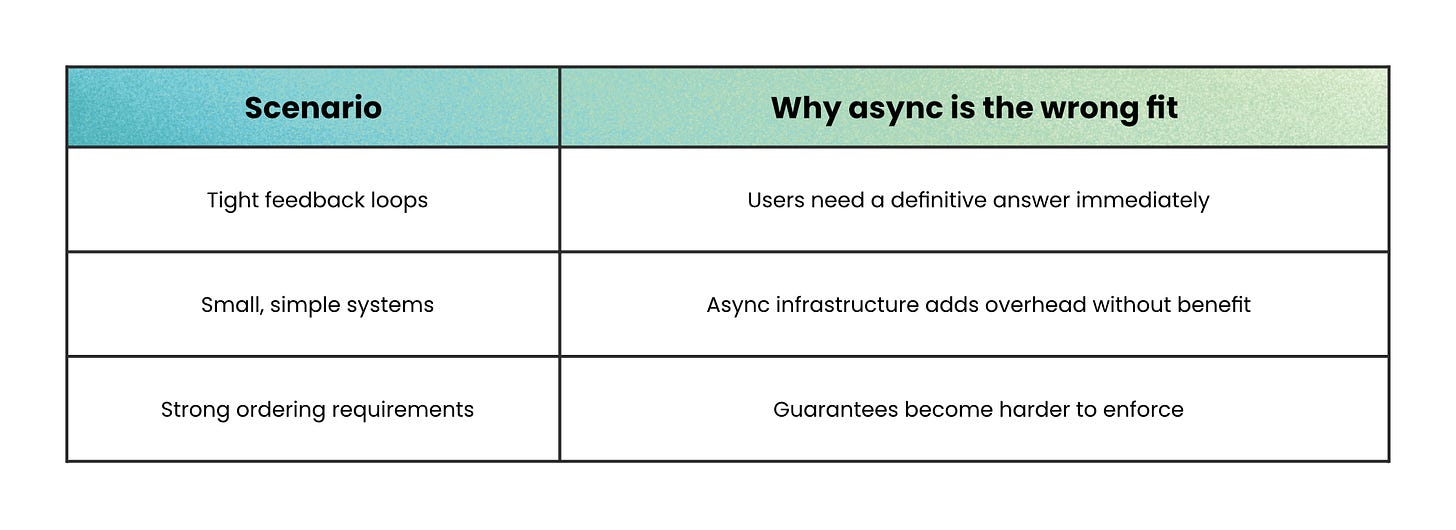

When not to use asynchronous communication

How to choose in practice

Most real systems don’t pick one style.

They place synchronous and asynchronous communication deliberately.

Use synchronous communication when the system cannot move forward without a definitive answer.

This includes login checks, payment authorization, inventory validation, and critical reads. In these cases, waiting is the point because correctness matters more than throughput. Failures should surface immediately and stop the flow.

Use asynchronous communication when the work does not need to block the user.

Long-running tasks, bursty workloads, and fan-out behavior belong here. Queuing the work keeps the system responsive and prevents one slow dependency from stalling everything else.

This is how systems gain autonomy and absorb partial failure.

Most mature architectures follow a simple pattern:

Sync at the edge → confirm the user’s action quickly and clearly.

Async behind the edge → process side effects like notifications, analytics, and downstream updates without holding the request open.
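
The split can be sketched in a few lines: payment authorization runs inline because checkout cannot proceed without it, and everything else is handed off as a single event. `authorize_payment`, its spending limit, and the `side_effects` queue are hypothetical stand-ins (the queue plays the role of a broker topic).

```python
import queue

# Stand-in for a broker topic that downstream consumers would read from.
side_effects: "queue.Queue[dict]" = queue.Queue()


class PaymentDeclined(Exception):
    """Raised when the payment provider refuses the charge."""


def authorize_payment(order_id: str, amount_cents: int,
                      limit_cents: int = 50_000) -> str:
    """Hypothetical synchronous authorization; the caller needs this answer now."""
    if amount_cents > limit_cents:
        raise PaymentDeclined(f"{order_id}: amount exceeds limit")
    return f"auth-{order_id}"


def checkout(order_id: str, amount_cents: int) -> dict:
    # Sync at the edge: wait for a definitive answer and fail immediately
    # if payment is declined. No event is emitted for a failed order.
    auth_id = authorize_payment(order_id, amount_cents)
    # Async behind the edge: hand off receipts, analytics, fulfillment, etc.
    # as one event and return without waiting for any consumer.
    side_effects.put({"type": "order_placed", "order_id": order_id, "auth_id": auth_id})
    return {"order_id": order_id, "status": "confirmed"}


if __name__ == "__main__":
    print(checkout("A-1", 4_999))  # {'order_id': 'A-1', 'status': 'confirmed'}
    print(side_effects.qsize())    # 1
```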

That split keeps the user experience fast while letting the system scale and recover under load.

One important caveat: “async code” is not the same as asynchronous communication.

If a service must wait for a response before it can proceed, the interaction is still synchronous at the architecture level, even if it uses promises, callbacks, or non-blocking I/O.
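
A minimal `asyncio` sketch of that caveat, assuming a hypothetical `fetch_payment_status` call that takes about 100 ms. The `await` frees the event loop to serve other requests, but `handle_request` itself cannot return until the reply arrives, so from the architecture's point of view this is still request/response, not asynchronous messaging.

```python
import asyncio
import time


async def fetch_payment_status(order_id: str) -> str:
    """Hypothetical non-blocking call to a payment service."""
    await asyncio.sleep(0.1)  # simulated network latency
    return "authorized"


async def handle_request(order_id: str) -> str:
    # Non-blocking I/O: the event loop can run other tasks meanwhile...
    status = await fetch_payment_status(order_id)
    # ...but this request cannot continue until the reply arrives, so the
    # interaction is still synchronous at the architecture level.
    return f"{order_id}: {status}"


if __name__ == "__main__":
    start = time.perf_counter()
    print(asyncio.run(handle_request("A-1")))  # A-1: authorized
    print(f"waited {time.perf_counter() - start:.2f}s")
```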

Wrapping up

Go back to the moment the user clicks “Purchase.”

Whether that action feels instant or slow is a design choice.

Payment authorization, inventory checks, and order creation run synchronously because the system needs a definitive answer.

Waiting there is intentional. That’s the critical path.

Everything else (emails, analytics, fraud checks, fulfillment) doesn’t need to block the user. Those steps run asynchronously so the order can complete even when downstream systems are slow or unavailable.

That’s the real trade-off.

Synchronous communication gives you immediate certainty but shared failure. Asynchronous communication gives you resilience and throughput by letting work finish later.

Well-designed systems use both.

If a user flow feels slow, the fix isn’t always faster processing.

Often, it’s deciding what truly needs an answer now and what can wait.
